Writing Multiple Outputs from a Single MapReduce Job

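Sometimes a Hadoop job needs to write its data to more than one output location. Hadoop provides the MultipleOutputs class for this purpose: it lets a job write its output to different locations as needed.

The MultipleOutputs class lets the map or reduce phase of a Hadoop job write its output to multiple files or folders. It also allows a different OutputFormat to be specified for each output.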

MultipleOutputs has a static method, addNamedOutput, that registers a named output with a given job. Its signature is:

public static void addNamedOutput(Job job,
                                  String namedOutput,
                                  Class<? extends OutputFormat> outputFormatClass,
                                  Class<?> keyClass,
                                  Class<?> valueClass)

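Then add the following code to the Mapper or Reducer class:

private MultipleOutputs<Text, IntWritable> mos;

@Override
public void setup(Context context) throws IOException, InterruptedException {
    // Create the MultipleOutputs instance once per task
    mos = new MultipleOutputs<>(context);
}

@Override
public void cleanup(Context context) throws IOException, InterruptedException {
    // Closing is required, otherwise buffered output may never be flushed
    mos.close();
}

After that, mos.write(key, value, baseOutputPath) can be used in place of context.write(key, value). To write to an output registered with addNamedOutput, call mos.write(namedOutput, key, value), which is the form the example in this article uses.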

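Problem description

In this example we use the Hadoop MultipleOutputs feature to write different log-analysis results to different output files.

We also implement a Hadoop Writable data type for HTTP server log entries. We assume that each log entry is stored with five fields: request host, timestamp, request URL, response size, and HTTP status code. A sample entry looks like this: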

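199.72.81.55 - - [01/Jul/1995:00:00:01 -0400] "GET /history/apollo/ HTTP/1.0" 200 6245

where:

- 199.72.81.55: the IP address of the client
- 01/Jul/1995:00:00:01 -0400: the time of the request
- GET: the HTTP method (GET or POST)
- /history/apollo/: the URL requested by the client
- 200: the HTTP status code (for example, 200 or 404)
- 6245: the size of the response body, in bytes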
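To make the field breakdown above concrete, here is a minimal plain-Java sketch that applies the same regular expression LogMapper uses later in this article to the sample line. The class name LogLineDemo and the parse helper are hypothetical names introduced only for illustration, and splitting the request line on spaces is an assumption about how the method and URL might be separated.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

final class LogLineDemo {
    // Same expression the mapper uses; groups 2 and 3 capture the two "-" placeholders
    private static final Pattern LOG_PATTERN = Pattern.compile(
            "^(\\S+) (\\S+) (\\S+) \\[([\\w:/]+\\s[+\\-]\\d{4})\\] \"(.+?)\" (\\d{3}) (\\d+)");

    /** Returns {ip, timestamp, method, url, status, size}, or null when the line does not match. */
    static String[] parse(String line) {
        Matcher m = LOG_PATTERN.matcher(line);
        if (!m.matches()) {
            return null;
        }
        // group(5) is the whole request line: method, URL and protocol separated by spaces
        String[] request = m.group(5).split(" ");
        String method = request[0];
        String url = request.length > 1 ? request[1] : "";
        return new String[] {m.group(1), m.group(4), method, url, m.group(6), m.group(7)};
    }

    public static void main(String[] args) {
        String line = "199.72.81.55 - - [01/Jul/1995:00:00:01 -0400] "
                + "\"GET /history/apollo/ HTTP/1.0\" 200 6245";
        // Print the extracted fields separated by " | "
        System.out.println(String.join(" | ", parse(line)));
    }
}
```

Lines that do not follow this layout (for example, entries whose size field is not numeric) return null here, which mirrors how the mapper skips invalid records.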

I. Create a Java Maven project

Maven dependencies (pom.xml):

<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>

    <!-- The artifactId determines the jar name: HadoopDemo-1.0-SNAPSHOT.jar -->
    <groupId>com.xueai8</groupId>
    <artifactId>HadoopDemo</artifactId>
    <version>1.0-SNAPSHOT</version>

    <dependencies>
        <!-- Hadoop common -->
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-common</artifactId>
            <version>3.3.1</version>
        </dependency>
        <!-- HDFS file system -->
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-hdfs</artifactId>
            <version>3.3.1</version>
        </dependency>
        <!-- MapReduce -->
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-mapreduce-client-core</artifactId>
            <version>3.3.1</version>
        </dependency>
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-mapreduce-client-jobclient</artifactId>
            <version>3.3.1</version>
        </dependency>
        <!-- JUnit -->
        <dependency>
            <groupId>junit</groupId>
            <artifactId>junit</artifactId>
            <version>4.12</version>
            <scope>test</scope>
        </dependency>
    </dependencies>

    <build>
        <plugins>
            <!-- Compiler plugin -->
            <plugin>
                <groupId>org.apache.maven.plugins</groupId>
                <!-- Sets the JDK version and character encoding used for compilation.
                     If unspecified, Maven 3 defaults to JDK 1.5 and Maven 2 to JDK 1.3. -->
                <artifactId>maven-compiler-plugin</artifactId>
                <configuration>
                    <source>1.8</source>          <!-- JDK version of the source code -->
                    <target>1.8</target>          <!-- version of the generated class files -->
                    <encoding>UTF-8</encoding>    <!-- character encoding -->
                </configuration>
            </plugin>
        </plugins>
    </build>
</project>

LogWritable.java:

A custom value data type. It must implement the Writable interface.

package com.xueai8.multioutput;

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.io.Writable;

import java.io.DataInput;
import java.io.DataOutput;
import java.io.IOException;

/**
 * A custom value data type; it must implement the Writable interface.
 */
public class LogWritable implements Writable {

    private Text userIP;               // IP address of the client
    private Text timestamp;            // time of the request
    private Text url;                  // URL requested by the client
    private IntWritable status;        // HTTP status code
    private IntWritable responseSize;  // size of the server response

    // Hadoop needs a no-argument constructor to create instances during deserialization
    public LogWritable() {
        this.userIP = new Text();
        this.timestamp = new Text();
        this.url = new Text();
        this.status = new IntWritable();
        this.responseSize = new IntWritable();
    }

    public void set(String userIP, String timestamp, String url, int status, int responseSize) {
        this.userIP.set(userIP);
        this.timestamp.set(timestamp);
        this.url.set(url);
        this.status.set(status);
        this.responseSize.set(responseSize);
    }

    public Text getUserIP() {
        return userIP;
    }

    public void setUserIP(Text userIP) {
        this.userIP = userIP;
    }

    public Text getTimestamp() {
        return timestamp;
    }

    public void setTimestamp(Text timestamp) {
        this.timestamp = timestamp;
    }

    public Text getUrl() {
        return url;
    }

    public void setUrl(Text url) {
        this.url = url;
    }

    public IntWritable getStatus() {
        return status;
    }

    public void setStatus(IntWritable status) {
        this.status = status;
    }

    public IntWritable getResponseSize() {
        return responseSize;
    }

    public void setResponseSize(IntWritable responseSize) {
        this.responseSize = responseSize;
    }

    // Serialization: the fields are written in a fixed order
    @Override
    public void write(DataOutput out) throws IOException {
        userIP.write(out);
        timestamp.write(out);
        url.write(out);
        status.write(out);
        responseSize.write(out);
    }

    // Deserialization: the fields must be read in the same order they were written
    @Override
    public void readFields(DataInput in) throws IOException {
        userIP.readFields(in);
        timestamp.readFields(in);
        url.readFields(in);
        status.readFields(in);
        responseSize.readFields(in);
    }
}
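The write and readFields methods only work if both handle the fields in the same order. The following plain-Java sketch illustrates that contract with the standard DataOutputStream and DataInputStream classes, which implement the same DataOutput and DataInput interfaces that a Writable receives. It is only an analogy: writeUTF and writeInt stand in for Text and IntWritable, so the byte layout is not the one Hadoop produces, and the class name FieldOrderDemo is hypothetical.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

final class FieldOrderDemo {
    /** Writes the five fields in a fixed order, in the spirit of LogWritable.write. */
    static byte[] serialize(String ip, String timestamp, String url, int status, int size) {
        ByteArrayOutputStream buffer = new ByteArrayOutputStream();
        try (DataOutputStream out = new DataOutputStream(buffer)) {
            out.writeUTF(ip);
            out.writeUTF(timestamp);
            out.writeUTF(url);
            out.writeInt(status);
            out.writeInt(size);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return buffer.toByteArray();
    }

    /** Reads the fields back in exactly the order they were written. */
    static String deserialize(byte[] data) {
        try (DataInputStream in = new DataInputStream(new ByteArrayInputStream(data))) {
            String ip = in.readUTF();
            String timestamp = in.readUTF();
            String url = in.readUTF();
            int status = in.readInt();
            int size = in.readInt();
            return ip + "," + timestamp + "," + url + "," + status + "," + size;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        byte[] bytes = serialize("199.72.81.55", "01/Jul/1995:00:00:01 -0400",
                "/history/apollo/", 200, 6245);
        System.out.println(deserialize(bytes));
    }
}
```

If deserialize read the two integers before the strings, the stream would be misinterpreted, which is the same failure a Writable shows when write and readFields disagree on field order.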

LogMapper.java:

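The Mapper class. It parses each log line and emits the client IP as the key and a LogWritable as the value.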

package com.xueai8.multioutput;

import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/**
 * Parses one access-log line per call and emits (ip, LogWritable).
 *
 * Sample line:
 *   199.72.81.55 - - [01/Jul/1995:00:00:01 -0400] "GET /history/apollo/ HTTP/1.0" 200 6245
 *
 * Capture groups:
 *   group(1) - ip
 *   group(4) - timestamp
 *   group(5) - request line (method, URL, protocol)
 *   group(6) - status
 *   group(7) - responseSize
 */
public class LogMapper extends Mapper<LongWritable, Text, Text, LogWritable> {

    // Regular expression that extracts the fields; compiled once and reused for every record
    private static final Pattern LOG_PATTERN = Pattern.compile(
            "^(\\S+) (\\S+) (\\S+) \\[([\\w:/]+\\s[+\\-]\\d{4})\\] \"(.+?)\" (\\d{3}) (\\d+)");

    private final Text outKey = new Text();
    private final LogWritable outValue = new LogWritable();  // custom Writable type

    @Override
    protected void map(LongWritable key, Text value, Context context)
            throws IOException, InterruptedException {
        Matcher matcher = LOG_PATTERN.matcher(value.toString());
        if (!matcher.matches()) {
            System.out.println("Not a valid log record");
            return;
        }

        // Extract the fields
        String ip = matcher.group(1);
        String timestamp = matcher.group(4);
        // group(5) is the whole request line, e.g. "GET /history/apollo/ HTTP/1.0";
        // the URL is its second space-separated token
        String[] requestParts = matcher.group(5).split(" ");
        String url = requestParts.length > 1 ? requestParts[1] : matcher.group(5);
        int status = Integer.parseInt(matcher.group(6));
        int responseSize = Integer.parseInt(matcher.group(7));

        // LogWritable is the value
        outValue.set(ip, timestamp, url, status, responseSize);
        outKey.set(ip);                   // the IP is the key
        context.write(outKey, outValue);  // emit
    }
}

LogMultiOutputReducer.java:

The Reducer class. It computes the total download volume for each IP and lists the access times of each IP.

package com.xueai8.multioutput;

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.output.MultipleOutputs;

import java.io.IOException;

/**
 * Computes the total download volume for each IP and lists the access times of each IP.
 */
public class LogMultiOutputReducer extends Reducer<Text, LogWritable, Text, IntWritable> {

    private final IntWritable outValue = new IntWritable(0);
    private MultipleOutputs<Text, IntWritable> mos;

    @Override
    protected void setup(Context context) throws IOException, InterruptedException {
        mos = new MultipleOutputs<>(context);
    }

    @Override
    public void reduce(Text key, Iterable<LogWritable> values, Context context)
            throws IOException, InterruptedException {
        int sum = 0;
        for (LogWritable val : values) {
            sum += val.getResponseSize().get();  // size of the HTTP response
            // Write one timestamp per record. Arguments: named output, key, value
            mos.write("timestamps", key, val.getTimestamp());
        }
        outValue.set(sum);
        // Write the per-IP total to the other named output
        mos.write("responsesizes", key, outValue);
    }

    @Override
    public void cleanup(Context context) throws IOException, InterruptedException {
        mos.close();  // MultipleOutputs must be closed here
    }
}
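The reducer's logic can be tried out without a cluster. The sketch below is a plain-Java stand-in, not Hadoop code: two maps play the role of the "responsesizes" and "timestamps" named outputs, and each record is a hypothetical {ip, timestamp, size} string array. The class name ReduceSketch and the sample records in main are made up for illustration.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

final class ReduceSketch {
    /** Sums the response sizes per IP; each record is {ip, timestamp, size}. */
    static Map<String, Integer> responseSizes(List<String[]> records) {
        Map<String, Integer> totals = new TreeMap<>();
        for (String[] r : records) {
            totals.merge(r[0], Integer.parseInt(r[2]), Integer::sum);
        }
        return totals;
    }

    /** Collects the access timestamps per IP, in input order. */
    static Map<String, List<String>> timestamps(List<String[]> records) {
        Map<String, List<String>> byIp = new TreeMap<>();
        for (String[] r : records) {
            byIp.computeIfAbsent(r[0], ip -> new ArrayList<>()).add(r[1]);
        }
        return byIp;
    }

    public static void main(String[] args) {
        // Made-up sample records, for illustration only
        List<String[]> records = Arrays.asList(
                new String[] {"199.72.81.55", "01/Jul/1995:00:00:01 -0400", "6245"},
                new String[] {"199.72.81.55", "01/Jul/1995:00:00:09 -0400", "1000"},
                new String[] {"192.168.0.2", "01/Jul/1995:00:00:06 -0400", "3985"});
        System.out.println(responseSizes(records));
        System.out.println(timestamps(records));
    }
}
```

In the real job the grouping by IP is done by the shuffle phase, so the reducer only has to iterate over the values of one key at a time.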

LogMultiOutputDriver.java:

The driver class. Note that it implements the Tool interface and is launched through ToolRunner.

package com.xueai8.multioutput;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.conf.Configured;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
import org.apache.hadoop.mapreduce.lib.output.MultipleOutputs;
import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;
import org.apache.hadoop.util.Tool;
import org.apache.hadoop.util.ToolRunner;

/**
 * Configures a job with several named outputs.
 */
public class LogMultiOutputDriver extends Configured implements Tool {

    public static void main(String[] args) throws Exception {
        int res = ToolRunner.run(new Configuration(), new LogMultiOutputDriver(), args);
        System.exit(res);
    }

    @Override
    public int run(String[] args) throws Exception {
        // ToolRunner has already consumed the generic Hadoop options,
        // so args holds only the input path and the output path
        if (args.length < 2) {
            System.err.println("Usage: <input_path> <output_path>");
            return -1;
        }
        Path input = new Path(args[0]);
        Path output = new Path(args[1]);

        Job job = Job.getInstance(getConf(), "log-analysis");
        job.setJarByClass(LogMultiOutputDriver.class);

        // set mapper
        job.setMapperClass(LogMapper.class);
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(LogWritable.class);

        // set reducer
        job.setReducerClass(LogMultiOutputReducer.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);

        // The default TextInputFormat is used, so no input format is set here
        FileInputFormat.setInputPaths(job, input);
        FileOutputFormat.setOutputPath(job, output);

        // Register the named outputs with the job
        MultipleOutputs.addNamedOutput(job, "responsesizes", TextOutputFormat.class, Text.class, IntWritable.class);
        MultipleOutputs.addNamedOutput(job, "timestamps", TextOutputFormat.class, Text.class, Text.class);

        boolean success = job.waitForCompletion(true);
        return (success ? 0 : 1);
    }
}

II. Configure log4j

Add a log4j configuration file named log4j.properties to the src/main/resources directory, with the following content:

log4j.rootLogger = info,stdout

### Print log messages to the console ###
log4j.appender.stdout = org.apache.log4j.ConsoleAppender
log4j.appender.stdout.Target = System.out
log4j.appender.stdout.layout = org.apache.log4j.PatternLayout
log4j.appender.stdout.layout.ConversionPattern = [%-5p] %d{yyyy-MM-dd HH:mm:ss,SSS} method:%l%n%m%n

三、项目打包

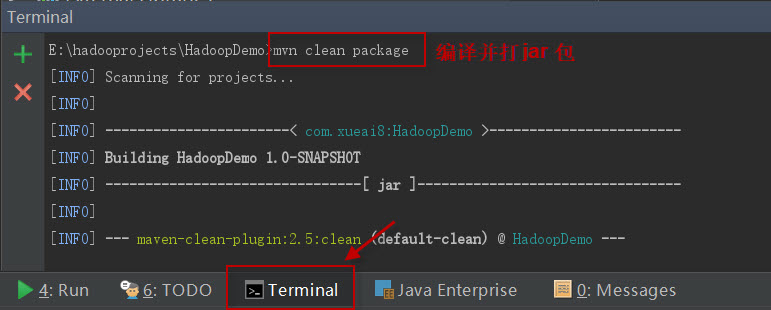

打开IDEA下方的终端窗口terminal,执行"mvn clean package"打包命令,如下图所示:

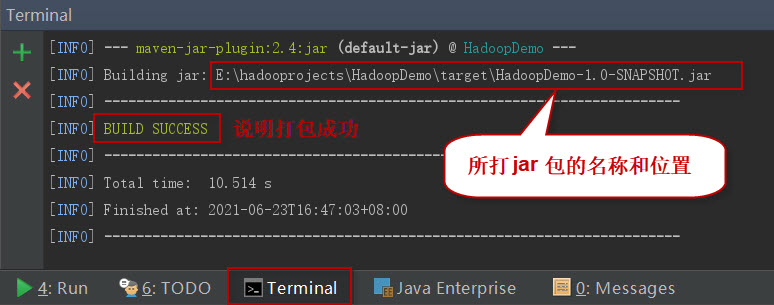

如果一切正常,会提示打jar包成功。如下图所示:

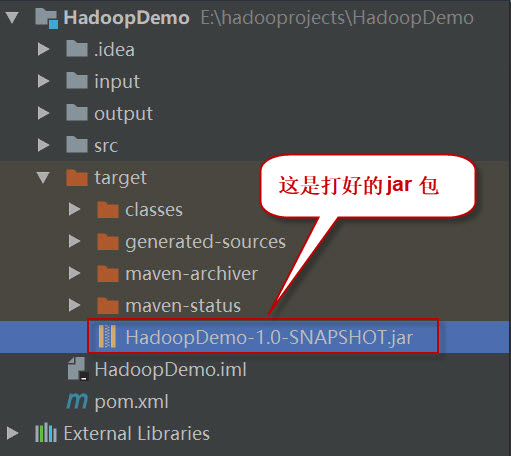

这时查看项目结构,会看到多了一个target目录,打好的jar包就位于此目录下。如下图所示:

IV. Deploy the project

Follow the steps below.

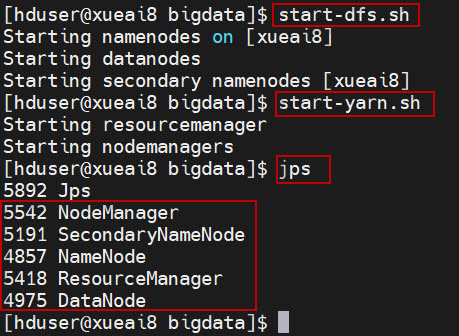

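1. Start the HDFS cluster and the YARN cluster. In a Linux terminal, run the following scripts:

$ start-dfs.sh
$ start-yarn.sh

Check that the daemons have started and that the cluster is running normally. In the Linux terminal, run:

$ jps

You should see the following five processes, which indicates that the cluster is running normally: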

5542 NodeManager

5191 SecondaryNameNode

4857 NameNode

5418 ResourceManager

4975 DataNode

2. Upload the log file log_sample.txt to the /data/mr/ directory on HDFS.

$ hdfs dfs -mkdir -p /data/mr
$ hdfs dfs -put log_sample.txt /data/mr/
$ hdfs dfs -ls /data/mr/

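4. Submit the job to the Hadoop cluster. (If the jar was built on Windows, copy it to the Linux machine first.)

In the terminal, run the following job submission command: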

$ hadoop jar HadoopDemo-1.0-SNAPSHOT.jar com.xueai8.multioutput.LogMultiOutputDriver /data/mr /data/mr-output

5、查看输出结果。

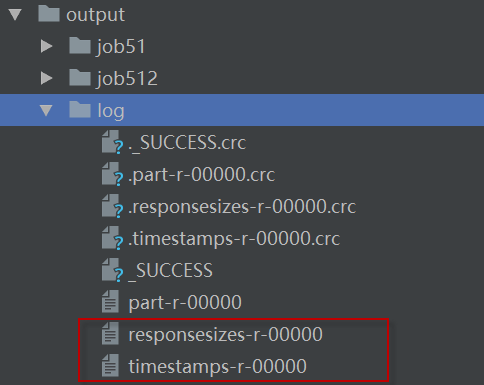

查看输出目录,可以看到每个命名输出写到一个单独的文件夹中了。如下图所示:
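When checking the output directory, it helps to know which file names to expect. The sketch below reproduces the naming pattern Hadoop applies to reducer-side named outputs, namely the output name, "-r-", and a five-digit partition number. It assumes TextOutputFormat without compression (so no file extension) and default naming; the class NamedOutputFiles and its helper are hypothetical and not part of Hadoop.

```java
import java.util.Locale;

final class NamedOutputFiles {
    /** Expected file name for a reducer's named output: <name>-r-<five-digit partition>. */
    static String reducerFile(String namedOutput, int partition) {
        return String.format(Locale.ROOT, "%s-r-%05d", namedOutput, partition);
    }

    public static void main(String[] args) {
        // With a single reducer, only partition 0 exists
        System.out.println(reducerFile("responsesizes", 0));
        System.out.println(reducerFile("timestamps", 0));
    }
}
```

Because the reducer in this article never calls context.write, the regular part-r-... files in the same directory are expected to be empty.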