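Using the Hadoop ArrayWritable Data Type

ArrayWritable stores an array of Writable values. To use an ArrayWritable as the value type of a Reducer's input, we need to create a subclass of ArrayWritable that specifies the type of Writable it holds. For example, if we need a data type that can store multiple LongWritable values, we can define one like this: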

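import org.apache.hadoop.io.ArrayWritable;
import org.apache.hadoop.io.LongWritable;

public class LongArrayWritable extends ArrayWritable {
    // The no-arg constructor tells ArrayWritable which element type to create
    public LongArrayWritable() {
        super(LongWritable.class);
    }
}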
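To see why the subclass has to name its element type, it helps to look at what ends up on the wire. As far as we understand ArrayWritable's serialization, it writes the element count followed by each element, with no type information, so the reading side must already know which class to instantiate. The sketch below imitates that layout in plain Java for an array of ints. The class IntArrayWireSketch and its methods are hypothetical stand-ins for illustration and are not part of Hadoop.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInputStream;
import java.io.DataOutputStream;
import java.io.IOException;

// Plain-Java stand-in for an ArrayWritable of IntWritable values.
// Hypothetical helper for illustration only; it is not part of Hadoop.
class IntArrayWireSketch {

    // Encode: element count first, then each element as a 4-byte int.
    static byte[] encode(int[] values) throws IOException {
        ByteArrayOutputStream bytes = new ByteArrayOutputStream();
        try (DataOutputStream out = new DataOutputStream(bytes)) {
            out.writeInt(values.length);
            for (int v : values) {
                out.writeInt(v);
            }
        }
        return bytes.toByteArray();
    }

    // Decode: the stream carries no type tag, so the reader must already
    // know that every element is an int.
    static int[] decode(byte[] data) throws IOException {
        try (DataInputStream in = new DataInputStream(new ByteArrayInputStream(data))) {
            int[] values = new int[in.readInt()];
            for (int i = 0; i < values.length; i++) {
                values[i] = in.readInt();
            }
            return values;
        }
    }

    public static void main(String[] args) throws IOException {
        byte[] wire = encode(new int[] {45, 50000});
        int[] back = decode(wire);
        // prints "12 bytes, age=45, salary=50000"
        System.out.println(wire.length + " bytes, age=" + back[0] + ", salary=" + back[1]);
    }
}
```

In the real class, the element type passed to super(...) plays the role that the hard-coded readInt() plays here.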

-----------------------------------------------------------------------------------------

Case description

We have the following employee records, stored in the data file input.txt, one record per line (fields: ID, name, age, gender, salary):

1201,gopal,45,Male,50000
1202,manisha,40,Female,51000
1203,khaleel,34,Male,30000
1204,prasanth,30,Male,31000
1205,kiran,20,Male,40000
1206,laxmi,25,Female,35000
1207,bhavya,20,Female,15000
1208,reshma,19,Female,14000
1209,kranthi,22,Male,22000
1210,Satish,24,Male,25000
1211,Krishna,25,Male,26000
1212,Arshad,28,Male,20000
1213,lavanya,18,Female,8000

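The task is to write a MapReduce application that processes this data set and outputs the highest salary for each gender within each age group (20 and under, 21 to 30, and over 30).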
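Before building the job, it can be useful to work out the expected answer locally. The following is a minimal, Hadoop-free sketch that groups the sample records by age group and gender and keeps the highest salary. The class AgeGroupMaxSalarySketch and its method names are hypothetical, and the group boundaries are assumed to match the conditions used in CaderPartitioner later in this article (age <= 20, age <= 30, otherwise).

```java
import java.util.Map;
import java.util.TreeMap;

// Local, Hadoop-free check of the expected result. The class and method
// names are hypothetical; they are not part of the project built below.
class AgeGroupMaxSalarySketch {

    // 0: age 20 and under, 1: age 21 to 30, 2: over 30.
    static int ageGroup(int age) {
        if (age <= 20) return 0;
        if (age <= 30) return 1;
        return 2;
    }

    // Returns "group/gender" -> highest salary, sorted by key.
    static Map<String, Integer> maxSalaries(String[] records) {
        Map<String, Integer> best = new TreeMap<>();
        for (String record : records) {
            String[] f = record.split(",", -1); // id,name,age,gender,salary
            int age = Integer.parseInt(f[2].trim());
            int salary = Integer.parseInt(f[4].trim());
            best.merge(ageGroup(age) + "/" + f[3].trim(), salary, Integer::max);
        }
        return best;
    }

    public static void main(String[] args) {
        String[] records = {
            "1201,gopal,45,Male,50000", "1202,manisha,40,Female,51000",
            "1203,khaleel,34,Male,30000", "1204,prasanth,30,Male,31000",
            "1205,kiran,20,Male,40000", "1206,laxmi,25,Female,35000",
            "1207,bhavya,20,Female,15000", "1208,reshma,19,Female,14000",
            "1209,kranthi,22,Male,22000", "1210,Satish,24,Male,25000",
            "1211,Krishna,25,Male,26000", "1212,Arshad,28,Male,20000",
            "1213,lavanya,18,Female,8000"
        };
        // One line per (age group, gender) pair with its highest salary
        maxSalaries(records).forEach((k, v) -> System.out.println(k + "\t" + v));
    }
}
```

Each key combines the age group index with the gender, which mirrors how the MapReduce job separates age groups by partition and genders by key.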

I. Create a Java Maven project

Maven configuration (pom.xml):

<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>

    <groupId>HadoopDemo</groupId>
    <artifactId>com.xueai8</artifactId>
    <version>1.0-SNAPSHOT</version>

    <dependencies>
        <!-- Hadoop common -->
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-common</artifactId>
            <version>3.3.1</version>
        </dependency>
        <!-- HDFS file system -->
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-hdfs</artifactId>
            <version>3.3.1</version>
        </dependency>
        <!-- MapReduce -->
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-mapreduce-client-core</artifactId>
            <version>3.3.1</version>
        </dependency>
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-mapreduce-client-jobclient</artifactId>
            <version>3.3.1</version>
        </dependency>
        <!-- JUnit -->
        <dependency>
            <groupId>junit</groupId>
            <artifactId>junit</artifactId>
            <version>4.12</version>
            <scope>test</scope>
        </dependency>
    </dependencies>

    <build>
        <plugins>
            <!-- Compiler plugin: sets the JDK level and source encoding used for compilation.
                 Without it, the plugin falls back to its own default level, which can be
                 older than 1.8 (for example 1.5 in older plugin versions). -->
            <plugin>
                <groupId>org.apache.maven.plugins</groupId>
                <artifactId>maven-compiler-plugin</artifactId>
                <configuration>
                    <source>1.8</source> <!-- JDK level of the source code -->
                    <target>1.8</target> <!-- JDK level of the generated class files -->
                    <encoding>UTF-8</encoding> <!-- source file encoding -->
                </configuration>
            </plugin>
        </plugins>
    </build>
</project>

IntArrayWritable.java:

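As noted at the start, to use ArrayWritable as the Reducer's input value type we subclass it and name the Writable type it stores. Here the elements are IntWritable, and a convenience set(String[]) method converts strings into IntWritable values.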

package com.xueai8.writable;

import org.apache.hadoop.io.ArrayWritable;
import org.apache.hadoop.io.IntWritable;

/**
 * A subclass of ArrayWritable whose elements are IntWritable.
 */
public class IntArrayWritable extends ArrayWritable {

    public IntArrayWritable() {
        super(IntWritable.class);
    }

    // Convenience setter: parses each string as an int and stores it
    public void set(String[] values) {
        IntWritable[] ints = new IntWritable[values.length];
        for (int i = 0; i < values.length; i++) {
            ints[i] = new IntWritable(Integer.parseInt(values[i]));
        }
        super.set(ints);
    }
}

IntArrayMapper.java:

package com.xueai8.writable;

import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class IntArrayMapper extends Mapper<LongWritable, Text, Text, IntArrayWritable> {

    // Reusable Hadoop objects
    private final Text genderKey = new Text();
    private final IntArrayWritable manyIntValue = new IntArrayWritable();

    // One input line looks like: 1201,gopal,45,Male,50000
    @Override
    public void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        String[] str = value.toString().split(",", -1);
        String[] output = { str[2], str[4] };     // age, salary

        genderKey.set(str[3]);                    // gender is the key
        manyIntValue.set(output);                 // [age, salary] is the value
        context.write(genderKey, manyIntValue);   // emit
    }
}
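The mapper above assumes that every input line has five comma-separated fields with numeric age and salary. A blank line, for example, would split into a single empty field and fail at str[2] with an ArrayIndexOutOfBoundsException. One possible way to guard against this is to validate the line before using it. The sketch below shows the idea in plain Java; EmployeeLineSketch and parseAgeAndSalary are hypothetical names, not part of the original project.

```java
// Hypothetical helper, not part of the original project: parses one
// employee record and reports malformed input instead of throwing.
class EmployeeLineSketch {

    // Returns {age, salary}, or null when the line cannot be parsed.
    static int[] parseAgeAndSalary(String line) {
        String[] fields = line.split(",", -1);
        if (fields.length != 5) {
            return null; // wrong number of columns (includes blank lines)
        }
        try {
            int age = Integer.parseInt(fields[2].trim());
            int salary = Integer.parseInt(fields[4].trim());
            return new int[] {age, salary};
        } catch (NumberFormatException e) {
            return null; // non-numeric age or salary
        }
    }

    public static void main(String[] args) {
        int[] ok = parseAgeAndSalary("1201,gopal,45,Male,50000");
        System.out.println(ok[0] + "," + ok[1]);            // prints "45,50000"
        System.out.println(parseAgeAndSalary("") == null);  // prints "true"
    }
}
```

In a mapper, a null result would typically mean skipping the record (and perhaps incrementing a counter) rather than calling context.write.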

CaderPartitioner.java (custom partitioner):

package com.xueai8.writable;

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.io.Writable;
import org.apache.hadoop.mapreduce.Partitioner;

// Splits the key-value pairs into three partitions according to age
public class CaderPartitioner extends Partitioner<Text, IntArrayWritable> {

    // Input format: <gender, [age, salary]>
    @Override
    public int getPartition(Text key, IntArrayWritable value, int numReduceTasks) {
        // get() is declared to return Writable[], so cast the element, not the array
        Writable[] fields = value.get();              // age, salary
        int age = ((IntWritable) fields[0]).get();    // age

        if (numReduceTasks == 0) {
            return 0;
        }

        if (age <= 20) {
            return 0;
        } else if (age <= 30) {
            return 1 % numReduceTasks;
        } else {
            return 2 % numReduceTasks;
        }
    }
}
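The modulo in the partitioner keeps the returned index valid when the job runs with fewer than three reducers, but it also means that age groups can end up sharing a partition. The sketch below copies the branching logic into a plain static method so that it can be tried with different reducer counts. PartitionIndexSketch and partitionFor are hypothetical names used only for this illustration.

```java
// Plain-Java copy of the partitioning rule, for experimenting without a cluster.
// Hypothetical class; the real job uses CaderPartitioner.
class PartitionIndexSketch {

    static int partitionFor(int age, int numReduceTasks) {
        if (numReduceTasks == 0) {
            return 0;
        }
        if (age <= 20) {
            return 0;
        } else if (age <= 30) {
            return 1 % numReduceTasks;
        } else {
            return 2 % numReduceTasks;
        }
    }

    public static void main(String[] args) {
        int[] ages = {19, 25, 45};
        for (int reducers = 1; reducers <= 3; reducers++) {
            StringBuilder line = new StringBuilder(reducers + " reducer(s):");
            for (int age : ages) {
                line.append(" age ").append(age).append(" -> ")
                    .append(partitionFor(age, reducers));
            }
            System.out.println(line);
        }
        // 1 reducer(s): age 19 -> 0 age 25 -> 0 age 45 -> 0
        // 2 reducer(s): age 19 -> 0 age 25 -> 1 age 45 -> 0
        // 3 reducer(s): age 19 -> 0 age 25 -> 1 age 45 -> 2
    }
}
```

With two reducers the youngest and oldest groups land in the same partition, so the reducer would report a single maximum per gender across both groups. This is why the driver needs exactly three reduce tasks for the results to be split by age group.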

IntArrayReducer.java:

package com.xueai8.writable;

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.io.Writable;
import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class IntArrayReducer extends Reducer<Text, IntArrayWritable, Text, IntWritable> {

    private final Text outKey = new Text();
    private final IntWritable outValue = new IntWritable(0);

    // Input format: <gender, [age, salary]>
    @Override
    public void reduce(Text key, Iterable<IntArrayWritable> values, Context context)
            throws IOException, InterruptedException {
        int max = -1;

        // Walk through every [age, salary] pair and keep the highest salary
        for (IntArrayWritable val : values) {
            Writable[] fields = val.get();
            IntWritable salary = (IntWritable) fields[1];   // salary
            if (salary.get() > max) {
                max = salary.get();
            }
        }

        outKey.set(key);
        outValue.set(max);
        context.write(outKey, outValue);
    }
}

IntArrayDriver.java:

package com.xueai8.writable;

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

public class IntArrayDriver {

    public static void main(String[] args) throws ClassNotFoundException, IOException, InterruptedException {
        if (args.length < 2) {
            System.err.println("Usage: IntArrayDriver <input_path> <output_path>");
            System.exit(-1);
        }

        Configuration conf = new Configuration();
        Job job = Job.getInstance(conf, "PartitionerDemo");
        job.setJarByClass(IntArrayDriver.class);

        // set mapper
        job.setMapperClass(IntArrayMapper.class);
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(IntArrayWritable.class);

        // set the custom partitioner
        job.setPartitionerClass(CaderPartitioner.class);

        // set reducer
        job.setReducerClass(IntArrayReducer.class);
        job.setNumReduceTasks(3);   // three reducers, one per partition
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);

        job.setInputFormatClass(TextInputFormat.class);
        job.setOutputFormatClass(TextOutputFormat.class);

        FileInputFormat.addInputPath(job, new Path(args[0]));
        FileOutputFormat.setOutputPath(job, new Path(args[1]));

        System.exit(job.waitForCompletion(true) ? 0 : -1);
    }
}

II. Configure log4j

Add a log4j configuration file named log4j.properties under src/main/resources, with the following content:

log4j.rootLogger = info,stdout

### Write log output to the console ###
log4j.appender.stdout = org.apache.log4j.ConsoleAppender
log4j.appender.stdout.Target = System.out
log4j.appender.stdout.layout = org.apache.log4j.PatternLayout
log4j.appender.stdout.layout.ConversionPattern = [%-5p] %d{yyyy-MM-dd HH:mm:ss,SSS} method:%l%n%m%n

III. Package the project

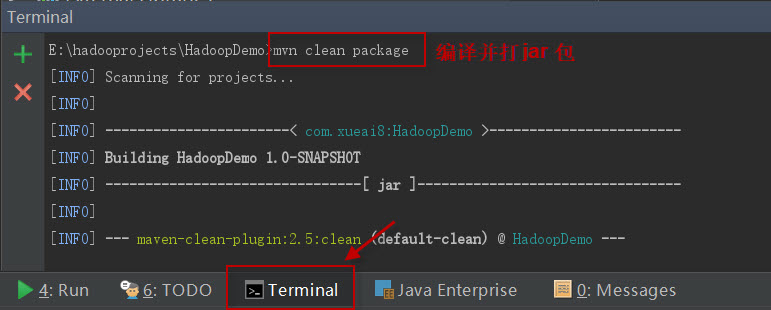

Open the Terminal window at the bottom of IDEA and run the packaging command "mvn clean package", as shown below:

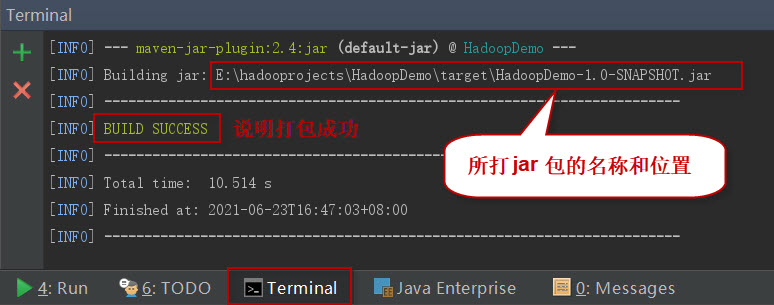

If everything goes well, Maven reports that the jar was built successfully, as shown below:

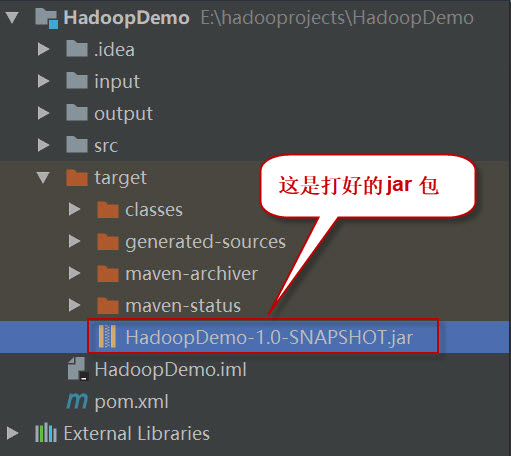

The project structure now contains a new target directory, and the packaged jar is located there, as shown below:

IV. Deploy the project

Follow the steps below.

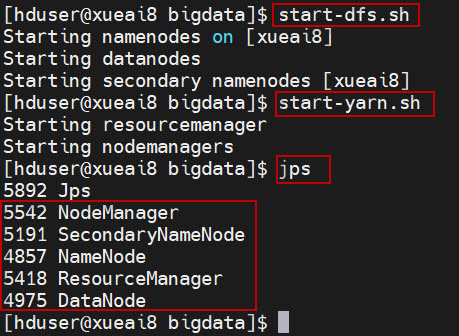

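1. Start the HDFS cluster and the YARN cluster. In a Linux terminal, run the following scripts:

$ start-dfs.sh
$ start-yarn.sh

Check that the processes have started and the cluster is running normally. In the Linux terminal, run: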

$ jps

You should see the following five processes running, which indicates that the cluster is healthy:

5542 NodeManager

5191 SecondaryNameNode

4857 NameNode

5418 ResourceManager

4975 DataNode

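2. Upload the data file input.txt to the /data/mr/ directory on HDFS.

$ hdfs dfs -mkdir -p /data/mr
$ hdfs dfs -put input.txt /data/mr/
$ hdfs dfs -ls /data/mr/

3. Submit the job to the Hadoop cluster. (If the jar is on Windows, copy it to Linux first.)

In the terminal, run the following job submission command: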

$ hadoop jar com.xueai8-1.0-SNAPSHOT.jar com.xueai8.writable.IntArrayDriver /data/mr /data/mr-output

4. View the output.

In the terminal, run the following HDFS command to list the output:

$ hdfs dfs -ls /data/mr-output

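Three result files have been generated (part-r-00000 to part-r-00002), one for each reducer, that is, one per partition.

View the contents of the first result file: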

$ hdfs dfs -cat /data/mr-output/part-r-00000

The output is as follows:

Female	15000
Male	40000

View the contents of the second result file:

$ hdfs dfs -cat /data/mr-output/part-r-00001

The output is as follows:

Female	35000
Male	31000

View the contents of the third result file:

$ hdfs dfs -cat /data/mr-output/part-r-00002

The output is as follows:

Female	51000
Male	50000