Emitting Values of Different Types from a Mapper

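When performing a reduce-side join, or when you want to avoid running several MapReduce computations to aggregate different types of attributes of one dataset, it is useful to emit values of more than one type from the Mapper. However, a Hadoop job declares a single map output value class, so a Reducer cannot accept input values of several unrelated types. In this situation we can use the GenericWritable class to wrap value instances that belong to different data types.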

ObjectWritable and GenericWritable

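ObjectWritable is a general-purpose wrapper for the following types: Java primitives, String, enum, Writable, null, or arrays of any of these types. It is used in Hadoop RPC to marshal and unmarshal method arguments and return values.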

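ObjectWritable is useful when a field can hold more than one type. For example, if the values in a SequenceFile have several types, you can declare the value type as ObjectWritable and wrap each value in an ObjectWritable. As a general-purpose mechanism, however, it wastes a fair amount of space, because it writes the class name of the wrapped type every time a value is serialized.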

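To overcome this drawback, Hadoop provides the GenericWritable class. When the number of types is small and known in advance, GenericWritable keeps a static array of the types and serializes the index into that array as the reference to the type, instead of the class name. To use GenericWritable, you must subclass it and specify the types it supports.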
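To make the space argument concrete, here is a small JDK-only illustration, not Hadoop's own serialization code, that measures a per-record type tag written as a class name with `DataOutput.writeUTF` against a tag written as a one-byte index into a fixed type table. The class `TypeTagSizeDemo` and its `TYPES` table are hypothetical; the real ObjectWritable and GenericWritable byte layouts differ in detail, but GenericWritable does write its type index as a single byte.

```java
import java.io.ByteArrayOutputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.util.Arrays;

// Illustration only (plain JDK, not Hadoop code): compares the size of a per-record
// type tag written as a class name with a tag written as a one-byte table index.
class TypeTagSizeDemo {
    // Hypothetical type table, playing the role of the array returned by getTypes()
    static final String[] TYPES = {
        "org.apache.hadoop.io.IntWritable",
        "org.apache.hadoop.io.Text"
    };

    // ObjectWritable-style idea: the tag is the class name itself
    static int classNameTagSize(String className) {
        ByteArrayOutputStream bytes = new ByteArrayOutputStream();
        try (DataOutputStream out = new DataOutputStream(bytes)) {
            out.writeUTF(className); // 2-byte length prefix + modified UTF-8 bytes
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return bytes.size();
    }

    // GenericWritable-style idea: the tag is the position of the class in TYPES
    static int indexTagSize(String className) {
        int index = Arrays.asList(TYPES).indexOf(className);
        if (index < 0) {
            throw new IllegalArgumentException("unknown type: " + className);
        }
        ByteArrayOutputStream bytes = new ByteArrayOutputStream();
        try (DataOutputStream out = new DataOutputStream(bytes)) {
            out.writeByte(index); // one byte, whatever the length of the class name
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return bytes.size();
    }

    public static void main(String[] args) {
        for (String type : TYPES) {
            System.out.println(type + ": name tag " + classNameTagSize(type)
                    + " bytes, index tag " + indexTagSize(type) + " byte");
        }
    }
}
```

For these two class names the name tag costs 34 and 27 bytes (a 2-byte length prefix plus the ASCII characters), while the index tag costs 1 byte; the difference is paid again for every serialized record.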

Case Description

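In this section we reuse the HTTP server log analysis example. This time, however, instead of defining a custom data type, we emit several different value types from the Mapper.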

In this example, we aggregate the total number of bytes the web server sent to each host, and output the list of requests made by that host (tab-separated).

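Here we assume that a log entry consists of five parts: the requesting host, the timestamp, the request (HTTP method and URL), the HTTP status code, and the response size, as in the following example: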

199.72.81.55 - - [01/Jul/1995:00:00:01 -0400] "GET /history/apollo/ HTTP/1.0" 200 6245

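Where:

- 199.72.81.55: the client's IP address

- 01/Jul/1995:00:00:01 -0400: the access time

- GET: the HTTP method (GET or POST)

- /history/apollo/: the URL requested by the client

- 200: the HTTP response code (for example 200 or 404)

- 6245: the size of the response body, in bytes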
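A quick way to check which fields the log pattern extracts is to run it on the sample line with plain `java.util.regex`. This standalone sketch reuses the same regular expression as the LogMapper code in this article; the class name `LogLineParseDemo` and the returned field order are illustrative choices.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Standalone check of the log-entry regex (JDK only, no Hadoop).
class LogLineParseDemo {
    // Same pattern as the one used in LogMapper
    static final Pattern LOG_ENTRY = Pattern.compile(
            "^(\\S+) (\\S+) (\\S+) \\[([\\w:/]+\\s[+\\-]\\d{4})\\] \"(.+?)\" (\\d{3}) (\\d+)");

    // Returns {host, timestamp, request, status, bytes}, or null if the line does not match
    static String[] parse(String line) {
        Matcher m = LOG_ENTRY.matcher(line);
        if (!m.matches()) {
            return null;
        }
        return new String[] {m.group(1), m.group(4), m.group(5), m.group(6), m.group(7)};
    }

    public static void main(String[] args) {
        String line = "199.72.81.55 - - [01/Jul/1995:00:00:01 -0400] \"GET /history/apollo/ HTTP/1.0\" 200 6245";
        System.out.println(String.join(" | ", parse(line)));
    }
}
```

Note that group 5 is the whole request line ("GET /history/apollo/ HTTP/1.0"), not only the URL, and that a line whose size field is "-" does not match because the pattern requires digits, so such lines are skipped.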

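Requirement: compute the total number of bytes downloaded by each host (IP address or hostname), and output the list of requests made by each host (tab-separated).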

Approach

The Mapper emits the byte count as an IntWritable and the request as a Text.

To do this, we implement a Hadoop GenericWritable data type that can wrap either an IntWritable instance or a Text instance.

I. Create a Java Maven Project

Maven configuration (pom.xml):

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>com.xueai8</groupId>

<artifactId>HadoopDemo</artifactId>

<version>1.0-SNAPSHOT</version>

<dependencies>

<!-- Hadoop common -->

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-common</artifactId>

<version>3.3.1</version>

</dependency>

<!-- HDFS file system -->

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-hdfs</artifactId>

<version>3.3.1</version>

</dependency>

<!-- MapReduce -->

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-mapreduce-client-core</artifactId>

<version>3.3.1</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-mapreduce-client-jobclient</artifactId>

<version>3.3.1</version>

</dependency>

<!-- JUnit -->

<dependency>

<groupId>junit</groupId>

<artifactId>junit</artifactId>

<version>4.12</version>

<scope>test</scope>

</dependency>

</dependencies>

<build>

<plugins>

<!-- Compiler plugin -->

<plugin>

<groupId>org.apache.maven.plugins</groupId>

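<!-- Set the JDK version and source encoding used for compilation; if they are not specified, older Maven versions fall back to a very old JDK level (1.5 for Maven 3, 1.3 for Maven 2) -->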

<artifactId>maven-compiler-plugin</artifactId>

<configuration>

<source>1.8</source> <!-- JDK version of the source code -->

<target>1.8</target> <!-- JDK version of the generated class files -->

<encoding>UTF-8</encoding><!-- source file encoding -->

</configuration>

</plugin>

</plugins>

</build>

</project>

MultiValueWritable.java:

First, write a class that extends org.apache.hadoop.io.GenericWritable and implement the getTypes() method so that it returns a CLASSES array listing the Writable value types to be wrapped.

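If you add a custom constructor, make sure the class still has a no-argument default constructor: Hadoop creates Writable instances reflectively before calling readFields() on them.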
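The reason for the no-argument constructor can be seen with plain reflection. Hadoop instantiates Writables through its ReflectionUtils helper and then fills them via readFields(); the JDK-only sketch below imitates just the reflective instantiation step. The classes `WithDefault` and `WithoutDefault` are hypothetical stand-ins, not Hadoop types.

```java
// JDK-only sketch of reflective instantiation (not Hadoop's ReflectionUtils).
class NoArgConstructorDemo {
    // Custom constructor plus an explicit no-arg constructor
    static class WithDefault {
        int value;
        WithDefault() { }
        WithDefault(int value) { this.value = value; }
    }

    // Only a custom constructor: the compiler no longer generates a default one
    static class WithoutDefault {
        int value;
        WithoutDefault(int value) { this.value = value; }
    }

    // True if the class can be created through its no-arg constructor
    static boolean canInstantiate(Class<?> type) {
        try {
            type.getDeclaredConstructor().newInstance();
            return true;
        } catch (ReflectiveOperationException e) { // e.g. NoSuchMethodException
            return false;
        }
    }

    public static void main(String[] args) {
        System.out.println("WithDefault: " + canInstantiate(WithDefault.class));
        System.out.println("WithoutDefault: " + canInstantiate(WithoutDefault.class));
    }
}
```

Declaring any constructor suppresses the compiler-generated default one, which is why MultiValueWritable declares its empty constructor explicitly.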

package com.xueai8.logmultivalue;

import org.apache.hadoop.io.GenericWritable;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.io.Writable;

public class MultiValueWritable extends GenericWritable {

private static Class[] CLASSES = new Class[]{

IntWritable.class,

Text.class

};

// A no-argument default constructor is required for reflective instantiation

public MultiValueWritable(){

}

public MultiValueWritable(Writable value){

set(value);

}

@Override

protected Class[] getTypes() {

return CLASSES;

}

}
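What GenericWritable does with the CLASSES array can be sketched without Hadoop: write a one-byte index into a shared type table, then the value's own encoding, and on the reading side use that index to decide how to decode. This is a simplified JDK-only model; the class `TaggedValueDemo`, its `TYPES` table (Integer standing in for IntWritable, String for Text) and the length-prefixed framing in `roundTrip` are assumptions for illustration, not Hadoop's implementation.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInput;
import java.io.DataInputStream;
import java.io.DataOutput;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.util.ArrayList;
import java.util.List;

// Simplified model of the GenericWritable idea: type index byte + payload.
class TaggedValueDemo {
    // Writer and reader must share this table in the same order, like getTypes()
    static final Class<?>[] TYPES = {Integer.class, String.class};

    static void write(DataOutput out, Object value) throws IOException {
        for (int i = 0; i < TYPES.length; i++) {
            if (TYPES[i].isInstance(value)) {
                out.writeByte(i); // type tag: position in TYPES
                if (i == 0) {
                    out.writeInt((Integer) value);
                } else {
                    out.writeUTF((String) value);
                }
                return;
            }
        }
        throw new IOException("unsupported type: " + value.getClass().getName());
    }

    static Object read(DataInput in) throws IOException {
        int index = in.readByte(); // the tag tells the reader how to decode the payload
        switch (index) {
            case 0:
                return in.readInt();
            case 1:
                return in.readUTF();
            default:
                throw new IOException("unknown type index: " + index);
        }
    }

    // Serializes a count followed by the tagged values, then decodes them again
    static List<Object> roundTrip(List<Object> values) {
        try {
            ByteArrayOutputStream buffer = new ByteArrayOutputStream();
            try (DataOutputStream out = new DataOutputStream(buffer)) {
                out.writeInt(values.size());
                for (Object value : values) {
                    write(out, value);
                }
            }
            List<Object> decoded = new ArrayList<>();
            try (DataInputStream in = new DataInputStream(new ByteArrayInputStream(buffer.toByteArray()))) {
                int count = in.readInt();
                for (int i = 0; i < count; i++) {
                    decoded.add(read(in));
                }
            }
            return decoded;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```

Because only the index is stored, reordering or removing entries in the type table breaks compatibility with data that was written earlier; new types are best appended at the end.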

LogMapper.java:

Declare MultiValueWritable as the Mapper's output value type, and wrap every Writable value the Mapper emits in a MultiValueWritable instance.

package com.xueai8.logmultivalue;

import java.io.IOException;

import java.util.regex.Matcher;

import java.util.regex.Pattern;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

/**

* Parses web server access log lines such as:

*

* 199.72.81.55 - - [01/Jul/1995:00:00:01 -0400] "GET /history/apollo/ HTTP/1.0" 200 6245

*/

public class LogMapper extends Mapper<Object, Text, Text, MultiValueWritable> {

// Log entry pattern (regular expression), compiled once instead of once per input line

private static final Pattern LOG_ENTRY_PATTERN = Pattern.compile("^(\\S+) (\\S+) (\\S+) \\[([\\w:/]+\\s[+\\-]\\d{4})\\] \"(.+?)\" (\\d{3}) (\\d+)");

private Text userHostText = new Text(); // holds the host

private Text requestUrl = new Text(); // holds the request line

private IntWritable bytesWritable = new IntWritable(); // holds the response size

@Override

public void map(Object key, Text value, Context context)

throws IOException, InterruptedException {

Matcher matcher = LOG_ENTRY_PATTERN.matcher(value.toString());

if (!matcher.matches()) {

return;

}

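String userHost = matcher.group(1); // extract the host (IP address or hostname)

userHostText.set(userHost); // the host is the output key

// Emit two values of different types for the same key

String request = matcher.group(5); // extract the request line, e.g. "GET /history/apollo/ HTTP/1.0"

requestUrl.set(request);

int bytes = Integer.parseInt(matcher.group(7)); // extract the response size

bytesWritable.set(bytes);

// Wrap each value in a MultiValueWritable and write it out

context.write(userHostText, new MultiValueWritable(requestUrl));

context.write(userHostText, new MultiValueWritable(bytesWritable));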

}

}
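The effect of emitting two values per input line can be simulated in memory. In this JDK-only sketch (not Hadoop), each already-parsed record adds a String request and an Integer size under its host key, and a TreeMap stands in for the shuffle-and-sort phase that groups values by key. The `LogRecord` type is hypothetical; also note that in a real job the order of values inside a group is not guaranteed, which is why the Reducer must check each value's runtime type rather than rely on position.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.SortedMap;
import java.util.TreeMap;

// In-memory simulation of map output grouped by key (not Hadoop code).
class MapShuffleDemo {
    // Hypothetical pre-parsed log record
    static final class LogRecord {
        final String host;
        final String request;
        final int bytes;

        LogRecord(String host, String request, int bytes) {
            this.host = host;
            this.request = request;
            this.bytes = bytes;
        }
    }

    static SortedMap<String, List<Object>> mapAndGroup(List<LogRecord> logRecords) {
        SortedMap<String, List<Object>> grouped = new TreeMap<>(); // sorted keys, like the shuffle
        for (LogRecord logRecord : logRecords) {
            List<Object> values = grouped.computeIfAbsent(logRecord.host, host -> new ArrayList<>());
            values.add(logRecord.request); // like writing new MultiValueWritable(requestUrl)
            values.add(logRecord.bytes);   // like writing new MultiValueWritable(bytesWritable)
        }
        return grouped;
    }

    public static void main(String[] args) {
        List<LogRecord> logRecords = Arrays.asList(
                new LogRecord("199.72.81.55", "GET /history/apollo/ HTTP/1.0", 6245),
                new LogRecord("129.94.144.152", "GET / HTTP/1.0", 7074));
        System.out.println(mapAndGroup(logRecords));
    }
}
```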

LogReducer.java:

Declare MultiValueWritable as the Reducer's input value type, and implement reduce() so that it handles each of the wrapped value types.

package com.xueai8.logmultivalue;

import java.io.IOException;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.io.Writable;

import org.apache.hadoop.mapreduce.Reducer;

public class LogReducer extends Reducer<Text, MultiValueWritable, Text, Text> {

private Text result = new Text();

@Override

public void reduce(Text key, Iterable<MultiValueWritable> values, Context context)

throws IOException, InterruptedException {

int sum = 0;

StringBuilder requests = new StringBuilder();

for (MultiValueWritable multiValueWritable : values) {

Writable writable = multiValueWritable.get();

if (writable instanceof IntWritable) { // response size: add it to the total

sum += ((IntWritable) writable).get();

} else if (writable instanceof Text) { // request line: append it, preceded by a tab

requests.append("\t").append(writable.toString());

}

}

// Emit the total bytes followed by the tab-separated requests

result.set(sum + requests.toString());

context.write(key, result);

}

}
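The reduce logic itself does not depend on Hadoop and can be tried on ordinary objects. In this JDK-only sketch, Integer values stand in for IntWritable and are summed, and String values stand in for Text and are appended, each preceded by a tab; the class name `ReduceLogicDemo` is illustrative.

```java
import java.util.Arrays;

// JDK-only version of the reduce loop: dispatch on the runtime type of each value.
class ReduceLogicDemo {
    static String reduce(Iterable<Object> values) {
        int sum = 0;
        StringBuilder requests = new StringBuilder();
        for (Object value : values) {
            if (value instanceof Integer) {        // response size
                sum += (Integer) value;
            } else if (value instanceof String) {  // request line, appended after a tab
                requests.append('\t').append(value);
            }
        }
        return sum + requests.toString(); // "<total>\t<request1>\t<request2>..."
    }

    public static void main(String[] args) {
        System.out.println(reduce(Arrays.<Object>asList(
                "GET / HTTP/1.0", 6245, "GET /history/ HTTP/1.0", 100)));
    }
}
```

Because the type check happens per value, the sizes and requests may arrive in any interleaving and the total is still correct.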

LogDriver.java:

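The application accepts any text file as input; lines that do not match the log pattern are skipped. You can run the LogDriver class directly from the IDE, passing the input and output paths as arguments.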

package com.xueai8.logmultivalue;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class LogDriver {

public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {

if (args.length < 2) {

System.err.println("Usage: LogDriver <input_path> <output_path>");

// System.err.println("Usage: LogDriver <input_path> <output_path> <num_reduce_tasks>");

System.exit(-1);

}

Configuration conf = new Configuration();

Job job = Job.getInstance(conf, "log-analysis");

job.setJarByClass(LogDriver.class);

job.setMapperClass(LogMapper.class);

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(MultiValueWritable.class); // use MultiValueWritable as the map output value class for this job

job.setReducerClass(LogReducer.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(Text.class);

// int numReduce = Integer.parseInt(args[2]);

// job.setNumReduceTasks(numReduce);

FileInputFormat.setInputPaths(job, new Path(args[0]));

FileOutputFormat.setOutputPath(job, new Path(args[1]));

System.exit(job.waitForCompletion(true) ? 0 : 1);

}

}

II. Configure log4j

Add a log4j configuration file named log4j.properties under the src/main/resources directory, with the following content:

log4j.rootLogger = info,stdout

### Write log output to the console ###

log4j.appender.stdout = org.apache.log4j.ConsoleAppender

log4j.appender.stdout.Target = System.out

log4j.appender.stdout.layout = org.apache.log4j.PatternLayout

log4j.appender.stdout.layout.ConversionPattern = [%-5p] %d{yyyy-MM-dd HH:mm:ss,SSS} method:%l%n%m%n

III. Package the Project

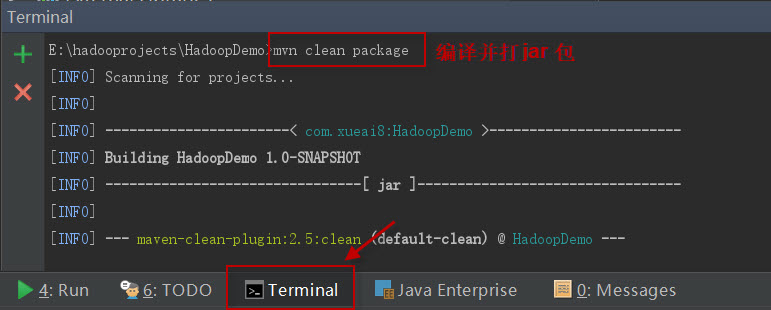

Open the Terminal window at the bottom of IDEA and run the packaging command "mvn clean package", as shown below:

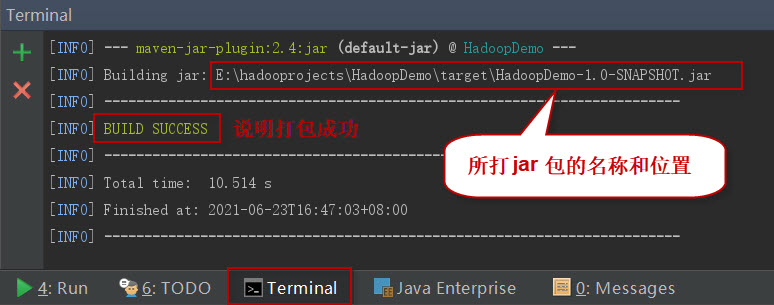

If everything goes well, Maven reports that the jar was built successfully, as shown below:

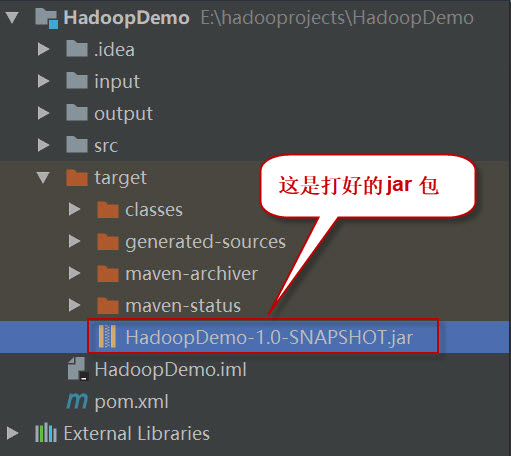

这时查看项目结构,会看到多了一个target目录,打好的jar包就位于此目录下。如下图所示:

四、项目部署

请按以下步骤执行。

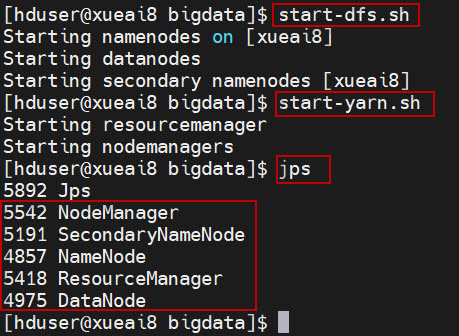

1. Start the HDFS and YARN clusters. In a Linux terminal, run the following scripts:

$ start-dfs.sh

$ start-yarn.sh

Check that the daemons have started and the cluster is running normally. In a Linux terminal, run:

$ jps

You should see the following five processes running, which indicates that the cluster is working:

5542 NodeManager

5191 SecondaryNameNode

4857 NameNode

5418 ResourceManager

4975 DataNode

2. Upload the log data file log_sample.txt to the /data/mr/ directory in HDFS:

$ hdfs dfs -mkdir -p /data/mr

$ hdfs dfs -put log_sample.txt /data/mr/

$ hdfs dfs -ls /data/mr/

3. Submit the job to the Hadoop cluster. (If the jar was built on Windows, copy it to the Linux machine first.)

In the terminal, run the following job submission command:

$ hadoop jar HadoopDemo-1.0-SNAPSHOT.jar com.xueai8.logmultivalue.LogDriver /data/mr /data/mr-output

4. View the output.

In the terminal, run the following HDFS commands to view the output:

$ hdfs dfs -ls /data/mr-output

$ hdfs dfs -cat /data/mr-output/part-r-00000

The final result is shown below (one line per host: host, total bytes, then the requests, separated by tabs):

129.94.144.152	7074	GET /images/ksclogo-medium.gif HTTP/1.0	GET / HTTP/1.0

199.120.110.21	9977	GET /shuttle/missions/sts-73/mission-sts-73.html HTTP/1.0	GET /images/launch-logo.gif HTTP/1.0	GET /shuttle/missions/sts-73/sts-73-patch-small.gif HTTP/1.0

199.72.81.55	21833	GET / HTTP/1.0	GET /history/apollo/ HTTP/1.0	GET /history/ HTTP/1.0	GET /images/WORLD-logosmall.gif HTTP/1.0	GET /images/USA-logosmall.gif HTTP/1.0	GET /images/MOSAIC-logosmall.gif HTTP/1.0	GET /images/ksclogo-medium.gif HTTP/1.0

205.189.154.54	55253	GET /images/NASA-logosmall.gif HTTP/1.0	GET /shuttle/countdown/ HTTP/1.0	GET /images/KSC-logosmall.gif HTTP/1.0	GET /cgi-bin/imagemap/countdown?99,176 HTTP/1.0	GET /shuttle/missions/sts-71/images/images.html HTTP/1.0	GET /shuttle/countdown/count.gif HTTP/1.0	GET /shuttle/missions/sts-71/images/KSC-95EC-0423.txt HTTP/1.0

205.212.115.106	11619	GET /shuttle/missions/sts-71/images/images.html HTTP/1.0	GET /shuttle/countdown/countdown.html HTTP/1.0

alyssa.prodigy.com	12054	GET /shuttle/missions/sts-71/sts-71-patch-small.gif HTTP/1.0

burger.letters.com	0	GET /images/NASA-logosmall.gif HTTP/1.0	GET /shuttle/countdown/liftoff.html HTTP/1.0	GET /shuttle/countdown/video/livevideo.gif HTTP/1.0

d104.aa.net	46285	GET /images/KSC-logosmall.gif HTTP/1.0	GET /shuttle/countdown/ HTTP/1.0	GET /shuttle/countdown/count.gif HTTP/1.0	GET /images/NASA-logosmall.gif HTTP/1.0

dave.dev1.ihub.com	46285	GET /shuttle/countdown/count.gif HTTP/1.0	GET /shuttle/countdown/ HTTP/1.0	GET /images/NASA-logosmall.gif HTTP/1.0	GET /images/KSC-logosmall.gif HTTP/1.0

dd14-012.compuserve.com	42732	GET /shuttle/technology/images/srb_16-small.gif HTTP/1.0

dial22.lloyd.com	61716	GET /shuttle/missions/sts-71/images/KSC-95EC-0613.jpg HTTP/1.0

gater3.sematech.org	41514	GET /images/KSC-logosmall.gif HTTP/1.0	GET /shuttle/countdown/count.gif HTTP/1.0

gater4.sematech.org	4771	GET /images/NASA-logosmall.gif HTTP/1.0	GET /shuttle/countdown/ HTTP/1.0

gayle-gaston.tenet.edu	12040	GET /shuttle/missions/sts-71/mission-sts-71.html HTTP/1.0

ix-or10-06.ix.netcom.com	10149	GET /software/winvn/userguide/wvnguide.gif HTTP/1.0	GET /software/winvn/userguide/wvnguide.html HTTP/1.0

ix-orl2-01.ix.netcom.com	45499	GET /shuttle/countdown/count.gif HTTP/1.0	GET /shuttle/countdown/ HTTP/1.0	GET /images/KSC-logosmall.gif HTTP/1.0

link097.txdirect.net	51128	GET /shuttle/missions/missions.html HTTP/1.0	GET /images/launchmedium.gif HTTP/1.0	GET /images/NASA-logosmall.gif HTTP/1.0	GET /images/KSC-logosmall.gif HTTP/1.0	GET /shuttle/missions/sts-78/mission-sts-78.html HTTP/1.0	GET /shuttle/missions/sts-78/sts-78-patch-small.gif HTTP/1.0	GET /images/launch-logo.gif HTTP/1.0	GET /shuttle/resources/orbiters/columbia.html HTTP/1.0	GET /shuttle/resources/orbiters/columbia-logo.gif HTTP/1.0

net-1-141.eden.com	34029	GET /shuttle/missions/sts-71/images/KSC-95EC-0916.jpg HTTP/1.0

netport-27.iu.net	7074	GET / HTTP/1.0

onyx.southwind.net	44295	GET /shuttle/countdown/count.gif HTTP/1.0	GET /images/KSC-logosmall.gif HTTP/1.0	GET /shuttle/countdown/countdown.html HTTP/1.0

piweba3y.prodigy.com	67720	GET /shuttle/technology/images/srb_mod_compare_3-small.gif HTTP/1.0	GET /shuttle/missions/sts-71/sts-71-patch-small.gif HTTP/1.0

pm13.j51.com	305722	GET /shuttle/missions/sts-71/movies/crew-arrival-t38.mpg HTTP/1.0

port26.annex2.nwlink.com	56782	GET /images/MOSAIC-logosmall.gif HTTP/1.0	GET /software/winvn/winvn.html HTTP/1.0	GET /software/winvn/winvn.gif HTTP/1.0	GET /images/construct.gif HTTP/1.0	GET /software/winvn/bluemarb.gif HTTP/1.0	GET /software/winvn/wvsmall.gif HTTP/1.0	GET /images/WORLD-logosmall.gif HTTP/1.0	GET /images/USA-logosmall.gif HTTP/1.0	GET /images/KSC-logosmall.gif HTTP/1.0

ppp-mia-30.shadow.net	14992	GET /images/NASA-logosmall.gif HTTP/1.0	GET / HTTP/1.0	GET /images/ksclogo-medium.gif HTTP/1.0	GET /images/WORLD-logosmall.gif HTTP/1.0	GET /images/USA-logosmall.gif HTTP/1.0	GET /images/MOSAIC-logosmall.gif HTTP/1.0

ppp-nyc-3-1.ios.com	129654	GET /shuttle/missions/sts-71/images/KSC-95EC-0917.jpg HTTP/1.0	GET /shuttle/missions/sts-71/images/KSC-95EC-0882.jpg HTTP/1.0

ppptky391.asahi-net.or.jp	15450	GET /images/launchpalms-small.gif HTTP/1.0	GET /facts/about_ksc.html HTTP/1.0

remote27.compusmart.ab.ca	23783	GET /shuttle/countdown/ HTTP/1.0	GET /shuttle/missions/sts-71/sts-71-patch-small.gif HTTP/1.0	GET /cgi-bin/imagemap/countdown?102,174 HTTP/1.0	GET /shuttle/missions/sts-71/images/images.html HTTP/1.0

scheyer.clark.net	49152	GET /shuttle/missions/sts-71/movies/sts-71-mir-dock-2.mpg HTTP/1.0

slip1.yab.com	23159	GET /shuttle/resources/orbiters/endeavour.gif HTTP/1.0	GET /shuttle/resources/orbiters/endeavour.html HTTP/1.0

smyth-pc.moorecap.com	121677	GET /history/apollo/apollo-13/images/70HC314.GIF HTTP/1.0	GET /history/apollo/apollo-spacecraft.txt HTTP/1.0	GET /history/apollo/images/footprint-small.gif HTTP/1.0

unicomp6.unicomp.net	49499	GET /shuttle/countdown/ HTTP/1.0	GET /images/KSC-logosmall.gif HTTP/1.0	GET /htbin/cdt_main.pl HTTP/1.0	GET /shuttle/countdown/count.gif HTTP/1.0	GET /images/NASA-logosmall.gif HTTP/1.0

waters-gw.starway.net.au	6723	GET /shuttle/missions/51-l/mission-51-l.html HTTP/1.0

www-a1.proxy.aol.com	3985	GET /shuttle/countdown/ HTTP/1.0

www-b4.proxy.aol.com	70712	GET /shuttle/countdown/video/livevideo.gif HTTP/1.0
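To spot-check results programmatically, each output line can be split back into its fields. TextOutputFormat writes the key, a tab, then the value, so every line has the form host, total, request1, request2, and so on, all tab-separated. The helper below is a JDK-only sketch with hypothetical names (`OutputLineDemo`, `HostSummary`); `String.split` drops trailing empty fields, so a trailing tab after the last request does not produce an empty entry.

```java
import java.util.Arrays;
import java.util.List;

// JDK-only helper that parses one line of part-r-00000 (not part of the job itself).
class OutputLineDemo {
    static final class HostSummary {
        final String host;
        final long totalBytes;
        final List<String> requests;

        HostSummary(String host, long totalBytes, List<String> requests) {
            this.host = host;
            this.totalBytes = totalBytes;
            this.requests = requests;
        }
    }

    static HostSummary parse(String line) {
        String[] fields = line.split("\t"); // trailing empty strings are removed
        if (fields.length < 2) {
            throw new IllegalArgumentException("expected host and total: " + line);
        }
        List<String> requests = Arrays.asList(fields).subList(2, fields.length);
        return new HostSummary(fields[0], Long.parseLong(fields[1]), requests);
    }

    public static void main(String[] args) {
        HostSummary s = parse("netport-27.iu.net\t7074\tGET / HTTP/1.0");
        System.out.println(s.host + " " + s.totalBytes + " " + s.requests);
    }
}
```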